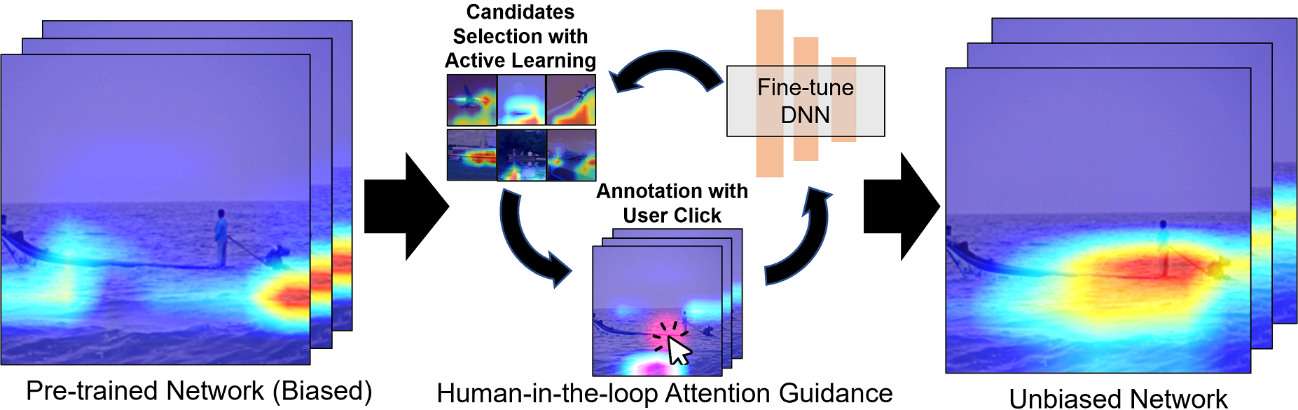

By designing a single-click attention-directing user interface and a specially designed active learning strategy, this system can train DNNs more accurately and efficiently. Image Credit: Haoran Xie from JAIST.

Deep neural networks (DNNs) are among the most widely used AI architectures.

Such networks attempt to imitate the neural function and connections of the brain and are normally trained on a dataset before they are deployed in the real world. Through this advance training, DNNs can be “taught” to identify features present in an image. For example, a DNN might be taught to recognize an image containing a boat by being trained on a dataset of images of boats.

However, the training dataset can cause problems if it is not designed correctly. In the boat example, since images of boats are normally taken when the boat is in water, the DNN might learn to recognize only the water rather than the boat and still report that the image contains a boat.

This is known as co-occurrence bias, and it is a very common issue in DNN training. To address it, a research group including Yi He, a researcher from Japan Advanced Institute of Science and Technology (JAIST), Senior Lecturer Haoran Xie from JAIST, Associate Professor Xi Yang of Jilin University, Project Lecturer Chia-Ming Chang of the University of Tokyo, and Professor Takeo Igarashi, has reported a new human-in-the-loop system.

A study describing this system was published in the Proceedings of the 28th Annual Conference on Intelligent User Interfaces (ACM IUI 2023).

There are some existing methods to solve the co-occurrence bias by either reorganizing the dataset or telling the system to focus on specific areas of the image. But reorganizing the dataset can be very difficult, while current methods for marking regions of interest (ROI) require extensive, pixel-by-pixel annotations by humans hired to do so, which incurs a high cost.

Haoran Xie, Professor, Japan Advanced Institute of Science and Technology

Xie added, “Thus, we created a much simpler attention method which helps humans point out ROI in the image using a simple one-click method. This drastically reduces time and costs for DNN training, and thus, deployment.”

The team found that earlier methods for attention guidance were ineffective because they were not designed to be interactive. They therefore proposed a new interactive technique in which an image can be annotated with a single click.

Users simply left-click on the parts of the image that should be identified and, if necessary, right-click on the parts that should be ignored. In the case of the boat images, users would left-click on the boat and right-click on the water around it.
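To make the idea concrete, here is a minimal sketch of how left and right clicks could be turned into a soft attention target for training. It is an illustration only, not the authors' implementation: the function name `clicks_to_attention_map`, the Gaussian falloff around each click, the `sigma` radius, and the neutral value of 0.5 are all assumptions introduced for this example.

```python
import numpy as np


def clicks_to_attention_map(shape, positive_clicks, negative_clicks, sigma=10.0):
    """Build a soft attention target in [0, 1] from click coordinates.

    shape: (height, width) of the image.
    positive_clicks: (row, col) positions the model should attend to (left-clicks).
    negative_clicks: (row, col) positions the model should ignore (right-clicks).
    sigma: assumed radius of influence of a click, in pixels (hypothetical).
    """
    rows, cols = np.mgrid[0:shape[0], 0:shape[1]]
    # 0.5 means "no guidance from the user" in this sketch.
    attention = np.full(shape, 0.5)

    def bump(click):
        # Gaussian bump centred on the clicked pixel.
        r, c = click
        return np.exp(-((rows - r) ** 2 + (cols - c) ** 2) / (2.0 * sigma ** 2))

    for click in positive_clicks:
        attention += 0.5 * bump(click)  # push towards 1 near left-clicks
    for click in negative_clicks:
        attention -= 0.5 * bump(click)  # push towards 0 near right-clicks
    return np.clip(attention, 0.0, 1.0)


# Boat example: one left-click on the boat, one right-click on the water.
target = clicks_to_attention_map((64, 64), [(20, 30)], [(50, 10)], sigma=5.0)
print(target[20, 30], target[50, 10], target[0, 63])
```

A map like this could then be compared with the network's own attention map during training, so that the model is penalized for looking at the water instead of the boat. How that comparison is actually done in the study is not described here.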

These clicks help the DNN identify the boat itself and reduce the effects of the co-occurrence bias inherent in training datasets. To reduce the number of images that need to be annotated, the team also designed a new active learning strategy based on a Gaussian mixture model (GMM).
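The article does not spell out how the GMM is used, so the following is only a rough sketch of one way a GMM could drive image selection. It assumes each unlabeled image already has a scalar "bias score" (a hypothetical quantity, for instance the share of the model's attention that falls on the background), fits a two-component one-dimensional GMM to those scores with EM, and asks the user to annotate the images most likely to belong to the higher-scoring component. All function names and parameters are made up for this example.

```python
import numpy as np


def responsibilities(x, weights, means, variances):
    """E-step: posterior probability of each component for each sample."""
    log_dens = (
        np.log(weights)
        - 0.5 * np.log(2.0 * np.pi * variances)
        - (x[:, None] - means) ** 2 / (2.0 * variances)
    )
    log_dens -= log_dens.max(axis=1, keepdims=True)  # numerical stability
    resp = np.exp(log_dens)
    return resp / resp.sum(axis=1, keepdims=True)


def fit_gmm_1d(x, n_iter=50):
    """Fit a two-component 1-D Gaussian mixture with plain EM."""
    x = np.asarray(x, dtype=float)
    # Start the two components at the extremes of the data.
    means = np.array([x.min(), x.max()])
    variances = np.full(2, x.var() + 1e-6)
    weights = np.full(2, 0.5)
    for _ in range(n_iter):
        resp = responsibilities(x, weights, means, variances)
        # M-step: re-estimate parameters from the soft assignments.
        nk = resp.sum(axis=0) + 1e-12
        weights = nk / nk.sum()
        means = (resp * x[:, None]).sum(axis=0) / nk
        variances = (resp * (x[:, None] - means) ** 2).sum(axis=0) / nk + 1e-6
    return weights, means, variances


def select_for_annotation(scores, budget):
    """Return indices of the `budget` images most likely to be biased."""
    scores = np.asarray(scores, dtype=float)
    weights, means, variances = fit_gmm_1d(scores)
    biased = int(np.argmax(means))  # component with the higher mean score
    resp = responsibilities(scores, weights, means, variances)
    return np.argsort(-resp[:, biased])[:budget]


# Synthetic scores: 40 "fine" images and 10 "suspect" ones (made-up data).
rng = np.random.default_rng(0)
scores = np.concatenate([rng.normal(0.1, 0.03, 40), rng.normal(0.8, 0.05, 10)])
picked = select_for_annotation(scores, budget=5)
print(sorted(picked.tolist()))
```

The point of a selection step like this is that the user only clicks on the few images where guidance is most likely to matter, rather than annotating the whole dataset.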

The new system was compared with existing ones, both numerically and through user surveys. The numerical analyses showed that the new active learning technique was more accurate than any of the existing ones, while the user surveys showed that the click-based system reduced the time needed to annotate ROI by 27%, and 81% of the participants preferred it to other systems.

Our work can drastically improve the transferability and interpretability of neural networks by increasing their accuracy for real-world applications. When systems make correct and clear decisions, it increases the confidence users have in AI and makes it easier to deploy these systems in the real world.

Haoran Xie, Professor, Japan Advanced Institute of Science and Technology

Xie concluded, “Thus our work focuses on increasing the trustworthiness of DNN deployments, which can have a major impact on the application and development of AI technologies in society.”

The research group believes their work could have a strong impact on the tech industry and enable more applications of AI technologies in the near future. In today’s rapidly developing world, this is considered a significant contribution.

Journal Reference:

He, Y., et al. (2023) Efficient Human-in-the-loop System for Guiding DNNs Attention. Proceedings of the 28th Annual Conference on Intelligent User Interfaces. doi.org/10.1145/3581641.3584074.