Semidynamics has just announced a RISC-V Tensor Unit that is designed for ultra-fast AI solutions and is based on its fully customisable 64-bit cores.

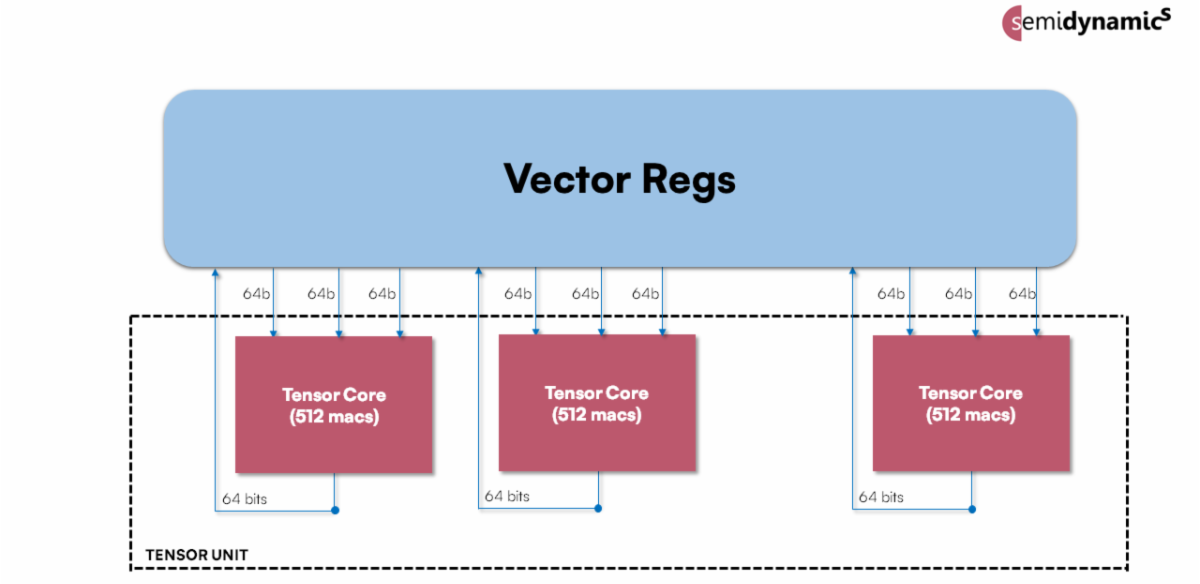

Semidynamics Tensor Unit. Image Credit: Semidynamics

State-of-the-art machine learning models, such as LLaMa-2 or ChatGPT, consist of billions of parameters and require computing power on the order of several trillion operations per second. Delivering that level of performance while keeping energy consumption low poses a significant challenge for hardware design. Semidynamics’ answer is the Tensor Unit, which is aimed at performance-hungry AI applications. The bulk of the computation in Large Language Models (LLMs) is in fully-connected layers, which can be implemented efficiently as matrix multiplication. The Tensor Unit provides hardware specifically tailored to matrix multiplication workloads, which the company says results in a large performance boost for AI.
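
To make the link between fully-connected layers and matrix multiplication concrete, here is a minimal pure-Python sketch. It is illustrative only and does not use or represent any Semidynamics API; the function names (`matmul`, `fully_connected`, `mac_count`) and the tiny example values are assumptions introduced for this example.

```python
def matmul(a, b):
    """Multiply an (m x k) matrix by a (k x n) matrix, both given as lists of rows."""
    k, n = len(b), len(b[0])
    assert all(len(row) == k for row in a), "inner dimensions must match"
    return [[sum(row[p] * b[p][j] for p in range(k)) for j in range(n)] for row in a]


def fully_connected(inputs, weights, bias):
    """Compute y = x @ W + b for a whole batch of input rows at once."""
    product = matmul(inputs, weights)
    return [[value + b_j for value, b_j in zip(row, bias)] for row in product]


def mac_count(batch, in_features, out_features):
    """Number of multiply-accumulate operations in one fully-connected layer."""
    return batch * in_features * out_features


# Hypothetical toy data: a batch of 2 inputs with 3 features, mapped to 2 outputs.
x = [[1.0, 2.0, 3.0],
     [0.0, -1.0, 1.0]]
w = [[1.0, 0.0],
     [0.0, 1.0],
     [1.0, 1.0]]
b = [0.5, -0.5]

y = fully_connected(x, w, b)
print(y)
print(mac_count(batch=2, in_features=3, out_features=2))
```

The multiply-accumulate count grows with the product of the batch size and both layer dimensions, which is why layers with billions of parameters push the operation count so high.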

The Tensor Unit is built on top of the Semidynamics RVV 1.0 Vector Processing Unit and uses the existing vector registers to store matrices. As a result, the Tensor Unit can handle layers that require matrix multiplication, such as Fully Connected and Convolution, while the Vector Unit handles the activation function layers (ReLU, Sigmoid, Softmax, etc.). This is a big improvement over stand-alone NPUs, which usually have trouble dealing with activation layers.
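
The division of labour described here can be sketched in plain Python as a matrix-multiply stage followed by an activation stage. This is a conceptual illustration only: it runs on an ordinary CPU, it is not Semidynamics code, and the names `layer`, `relu` and `softmax` as well as the sample values are assumptions made for the example.

```python
import math


def matmul(a, b):
    """Matrix-multiply stage: the kind of work a tensor unit is built for."""
    k, n = len(b), len(b[0])
    assert all(len(row) == k for row in a), "inner dimensions must match"
    return [[sum(row[p] * b[p][j] for p in range(k)) for j in range(n)] for row in a]


def relu(row):
    """Element-wise activation: the kind of work that suits a vector unit."""
    return [max(0.0, v) for v in row]


def softmax(row):
    """Row-wise softmax, shifted by the row maximum for numerical stability."""
    peak = max(row)
    exps = [math.exp(v - peak) for v in row]
    total = sum(exps)
    return [e / total for e in exps]


def layer(inputs, weights, activation):
    """One network layer: matrix multiply first, then the activation on each row."""
    pre_activation = matmul(inputs, weights)
    return [activation(row) for row in pre_activation]


# Hypothetical toy values chosen so the result is easy to check by hand.
hidden = layer([[1.0, 2.0]], [[1.0, -1.0], [0.5, -1.0]], relu)
probs = layer(hidden, [[0.0, 0.0], [1.0, 1.0]], softmax)
print(hidden, probs)
```

Both stages operate on the same intermediate rows, which mirrors the article's point that the matrices live in the vector registers shared by the two units.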

The Tensor Unit leverages both the Vector Unit and the Atrevido-423 Gazzillion™ capabilities to fetch the data it needs from memory. Tensor Units consume data at an astounding rate and, without Gazzillion, a normal core would not keep up with the Tensor Unit’s demands. Other solutions rely on difficult-to-program DMAs to solve this problem. Instead, Semidynamics integrates the Tensor Unit into its cache-coherent subsystem, which the company says opens a new era of programming simplicity for AI software.

In addition, because the Tensor Unit uses the vector registers to store its data and does not include new, architecturally-visible state, it seamlessly works under any RISC-V vector-enabled Linux without any changes.

Semidynamics’ CEO and founder, Roger Espasa, said, “This new Tensor Unit is designed to fully integrate with our other innovative technologies to provide solutions with outstanding AI performance. First, at the heart, is our 64-bit fully customisable RISC-V core. Then our Vector Unit which is constantly fed data by our Gazzillion technology so that there are no data misses. And then the Tensor Unit that does the matrix multiplications required by AI. Every stage of this solution has been designed to be fully integrated with the others for optimal AI performance and very easy programming. The result is a performance increase of 128x compared to just running the AI software on the scalar core. The world wants super-fast AI solutions and that is what our unique set of technologies can now provide.”

Further details on the Tensor Unit will be disclosed at the RISC-V North America Summit in Santa Clara on November 7th, 2023.