Apr 12 2017

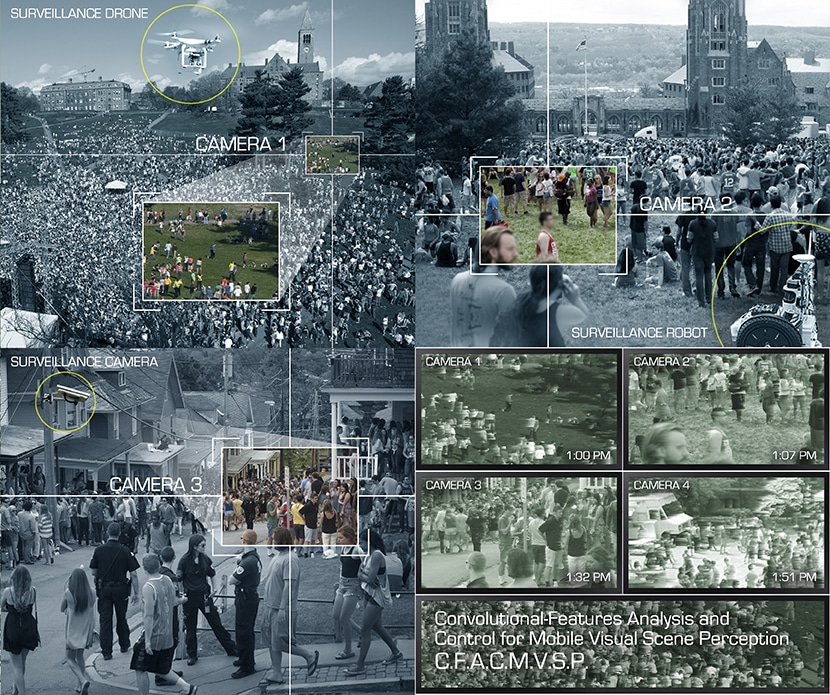

Seeing many views of the same area from fixed and mobile cameras could confuse a human, but a computer can combine them all, track people and objects, and notice significant events. (Credit: Engineering Communications)

According to Cornell researchers, robots could do the job of monitoring security cameras better than humans, because they can share images with one another and communicate at the speed of light.

The researchers are designing a system that lets groups of robots share information as they move around and, when needed, get help interpreting what they see, so that they can conduct surveillance as a single unit with many eyes. Beyond surveillance, the technology may help when groups of robots replace humans in dangerous tasks, such as cleaning up after a nuclear meltdown, disposing of landmines, or surveying the damage after a hurricane or flood.

Kilian Weinberger, associate professor of computer science, is partnering on the project with Silvia Ferrari, professor of mechanical and aerospace engineering, and Mark Campbell, the John A. Mellowes ’60 Professor in Mechanical Engineering.

Once you have robots that cooperate, you can do all sorts of things.

Kilian Weinberger

Their project, “Convolutional-Features Analysis and Control for Mobile Visual Scene Perception,” is supported by a four-year, $1.7 million grant from the U.S. Office of Naval Research (ONR). The researchers will draw on their extensive expertise in computer vision. They will match and stitch together images of the same area from multiple cameras, identify objects, and track objects and people as they move from place to place. The task will require groundbreaking research, Weinberger said, because previous work in the field has concentrated on analyzing images from a single camera, which is sometimes mobile but frequently stationary. The new system will combine data from mobile observers, fixed cameras, and outside sources.

The mobile observers might include autonomous ground vehicles, aircraft, and perhaps humanoid robots moving through a crowd. They will transmit their images to a central control unit, which might have access to other cameras observing the region of interest. The control unit will also draw on the internet for help labeling what it observes: for instance, how to open a container, the make of a car, or the identity of a person.

The system might pick up an important face and then track that face through the crowd, Weinberger suggested. In previous work, Ferrari created what might be described as a robot game of Marco Polo, in which a group of hunters tracks targets through a difficult environment.
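The article does not say how a face picked up by one camera would be recognized again in another camera's view. One common approach, assumed here purely for illustration, is to describe each detection with a feature vector (for example, convolutional features from a deep network) and to match detections by cosine similarity. The sketch below uses made-up three-dimensional vectors and a hypothetical `match_across_cameras` helper with an arbitrary 0.8 threshold; it is not the Cornell team's method.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two feature vectors (1.0 means same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # a zero vector carries no direction, so treat it as dissimilar
    return dot / (norm_a * norm_b)


def match_across_cameras(target, detections, threshold=0.8):
    """Return the id of the detection most similar to `target`, or None.

    `detections` maps a detection id in another camera's view to its feature
    vector; `threshold` is a hypothetical cut-off below which no match is declared.
    """
    if not detections:
        return None
    best_id = max(detections, key=lambda d: cosine_similarity(target, detections[d]))
    if cosine_similarity(target, detections[best_id]) < threshold:
        return None
    return best_id


if __name__ == "__main__":
    # Made-up features for a face seen by camera A and two people seen by camera B.
    face_in_camera_a = [0.9, 0.1, 0.3]
    camera_b = {"person_1": [0.1, 0.9, 0.2], "person_2": [0.88, 0.12, 0.31]}
    print(match_across_cameras(face_in_camera_a, camera_b))  # person_2
```

A practical tracker would also have to handle many targets at once and people who are temporarily hidden from view; this sketch ignores both for brevity.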

Because they understand the context of a scene, robot observers may detect suspicious actors and activities that might otherwise go unnoticed. A person running on a college campus may be a common sight, but in a secured area the same behavior may call for closer inspection.

The core technology combines “deep learning,” which allows a computer to interpret images, with “Bayesian modeling,” which allows it to update its model of the world as new data come in. The software will also include a “planning function” that works out how to gather the additional data needed to resolve uncertainty. It will help its mobile agents avoid obstacles and, if needed, guide them to locations where a closer look is required.
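To make the Bayesian and planning ideas more concrete, here is a minimal sketch under stated assumptions: two made-up hypotheses about a tracked person ("routine" and "suspicious"), hand-picked likelihoods, and hypothetical sensor names. It shows how the same observation ("running") shifts the belief very differently depending on a context-dependent prior, echoing the campus example above, and how a greedy planner might pick the camera whose next observation is expected to leave the least uncertainty (measured as entropy). It illustrates the general technique only and is not the project's actual model.

```python
import math

# Hypothetical likelihood of observing "running" under each hypothesis
# (illustrative numbers, not from the project).
RUNNING = {"routine": 0.1, "suspicious": 0.6}

# Hypothetical sensors: each maps an observation to P(observation | hypothesis).
SENSORS = {
    "fixed_webcam": {  # distant, weakly informative view
        "alarm": {"routine": 0.4, "suspicious": 0.6},
        "clear": {"routine": 0.6, "suspicious": 0.4},
    },
    "mobile_robot": {  # close-up view, more informative
        "alarm": {"routine": 0.05, "suspicious": 0.9},
        "clear": {"routine": 0.95, "suspicious": 0.1},
    },
}


def bayes_update(belief, likelihood):
    """Posterior is proportional to prior times likelihood, then normalized."""
    unnormalized = {h: belief[h] * likelihood[h] for h in belief}
    total = sum(unnormalized.values())
    return {h: p / total for h, p in unnormalized.items()}


def entropy(belief):
    """Shannon entropy in bits: a measure of remaining uncertainty."""
    return -sum(p * math.log2(p) for p in belief.values() if p > 0)


def expected_entropy(belief, sensor):
    """Average posterior entropy over the observations a sensor could return."""
    result = 0.0
    for likelihood in sensor.values():
        p_obs = sum(belief[h] * likelihood[h] for h in belief)
        if p_obs > 0:
            result += p_obs * entropy(bayes_update(belief, likelihood))
    return result


def choose_sensor(belief, sensors):
    """Greedy planning step: query the sensor expected to leave the least uncertainty."""
    return min(sensors, key=lambda name: expected_entropy(belief, sensors[name]))


if __name__ == "__main__":
    campus = bayes_update({"routine": 0.99, "suspicious": 0.01}, RUNNING)
    secured = bayes_update({"routine": 0.8, "suspicious": 0.2}, RUNNING)
    print(round(campus["suspicious"], 3))   # 0.057
    print(round(secured["suspicious"], 3))  # 0.6
    print(choose_sensor(secured, SENSORS))  # mobile_robot
```

The planner here looks only one step ahead; that greedy choice is a simplifying assumption made for brevity, and a fuller planner would also weigh travel cost and obstacles for the mobile agents.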

While the Navy might deploy such systems with drone aircraft or other autonomous vehicles, the Cornell team plans initial tests on the Cornell campus. They will use research robots to “surveil” crowded areas while gaining an overview from webcams that are already installed. This work might lead to incorporating the new technology into campus security, Ferrari suggested.

In addition to the current ONR grant, the researchers' earlier work was supported by the National Science Foundation and the U.S. Department of Energy.