To better understand the RobDact, a bionic underwater vehicle, a research team combined computational fluid dynamics with a force measurement experiment. The result was an accurate hydrodynamic model of the vehicle.

Today, underwater robots are employed for a variety of marine operations, including mapping, underwater exploration, and the fishing industry.

The majority of conventional underwater robots are propelled by a propeller, which works well for steady-speed cruising in open water.

However, while carrying out a specific mission, underwater robots frequently need to move or hover at low speeds in turbulent waters. In these circumstances, a propeller struggles to move the robot.

The propeller’s “twitching” motion is another consideration when an underwater robot is traveling slowly through turbulent waters. This twitching produces unpredictable pulses in the fluid, which lowers the robot’s efficiency.

Scientists have been working on developing aquatic robots that resemble living organisms. These bionic vehicles move through the water in a manner akin to that of fish or manta rays. They operate more efficiently and stably in the water than conventional propeller-driven underwater vehicles, while also being environmentally benign.

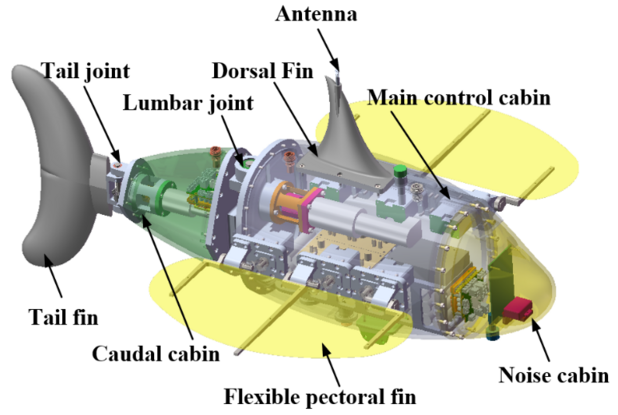

Image Credit: Rui Wang, Institute of Automation, Chinese Academy of Sciences

As underwater robots move through the water, the surrounding fluid influences them, a phenomenon called the hydrodynamic effect. The robot must contend with unknown water flows and forces while traveling, which can lead to unwanted changes in its position.

Researchers need a more accurate hydrodynamic model to control the robot better. Building such a model is typically difficult and complex. Because the real underwater environment is dynamic and hard to predict, the model parameters can change as the environment changes.

Researchers have used computational fluid dynamics to develop hydrodynamic models for underwater robots. The models produced by computational fluid dynamics alone, however, are not as accurate and useful as they should be. The study team tried a new approach to solve this problem.

“To make the hydrodynamic model more accurate and practical, we combined the computational fluid dynamics and a force measurement experiment.”

Rui Wang, Researcher, Institute of Automation, Chinese Academy of Sciences

The researchers determined the parameters of the hydrodynamic model using computational fluid dynamics. Then, to measure the force produced by the RobDact vehicle, they built a force measurement platform.

“This could help us have a better understanding of the underwater vehicle’s motion state, and control the underwater vehicle more accurately,” said Qiyuan Cao, a researcher at the Institute of Automation, Chinese Academy of Sciences.

The team’s work allowed them to calculate the RobDact’s hydrodynamic force at various speeds. The force measurement platform let them measure the RobDact’s force in the X, Y, and Z directions.

Through their force measurement experiments, they established a mapping between the RobDact’s fluctuation characteristics and the vehicle’s thrust. By combining the rigid body dynamic model of the RobDact with this thrust-mapping model, the scientists were able to create a precise and useful hydrodynamic model of the RobDact in various motions.
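
The idea of pairing a thrust map with a rigid body model can be illustrated with a minimal Python sketch. It is not the researchers’ model: the thrust form (a gain times amplitude times frequency squared), the single forward degree of freedom, the quadratic drag term, and every number and function name below are assumptions chosen only for illustration.

```python
# Hypothetical illustration only: model forms, names, and numbers are
# assumptions, not equations or data from the RobDact study.

def fit_thrust_map(samples):
    """Least-squares fit of the assumed map thrust = k * amplitude * frequency**2.

    samples: list of (frequency_hz, amplitude_rad, measured_thrust_n) tuples,
    standing in for readings from a force measurement platform.
    Returns the fitted gain k.
    """
    num = sum(a * f**2 * t for f, a, t in samples)
    den = sum((a * f**2) ** 2 for f, a, _ in samples)
    return num / den


def simulate_surge(k, frequency_hz, amplitude_rad, mass_kg, drag_coeff,
                   duration_s=20.0, dt=0.01):
    """Integrate m * dv/dt = thrust - drag_coeff * v * |v| with forward Euler.

    Returns the forward speed (m/s) at the end of the simulated interval.
    """
    thrust = k * amplitude_rad * frequency_hz**2
    v = 0.0
    for _ in range(int(duration_s / dt)):
        v += dt * (thrust - drag_coeff * v * abs(v)) / mass_kg
    return v


# Made-up force readings: (frequency in Hz, amplitude in rad, thrust in N).
readings = [(1.0, 0.2, 0.1), (2.0, 0.2, 0.4), (2.0, 0.4, 0.8)]
k = fit_thrust_map(readings)

# Made-up mass and drag coefficient for a small vehicle.
speed = simulate_surge(k, frequency_hz=2.0, amplitude_rad=0.4,
                       mass_kg=5.0, drag_coeff=3.2)
print(f"fitted gain k = {k:.3f}, final forward speed = {speed:.3f} m/s")
```

In this sketch the thrust map plays the role of the experimentally measured part, while the drag coefficient stands in for a parameter that computational fluid dynamics could supply. A full model would cover all three axes and rotational motion.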

In their upcoming research on the intelligent control of bionic underwater vehicles, the scientists plan to use the hydrodynamic model together with artificial intelligence techniques such as reinforcement learning.

“The ultimate goal is to promote the practical application of bionic underwater vehicles in water environment monitoring and underwater search and rescue,” said Wang.

The National Natural Science Foundation of China, the Beijing Natural Science Foundation, the Youth Innovation Promotion Association (Chinese Academy of Sciences), and the Young Elite Scientist Sponsorship Program (China Association for Science and Technology) all provided funding for the study.