With demand for automation increasing in the 21st century, robots have seen rapid and unprecedented growth across many industries, including logistics, warehousing, manufacturing, and food delivery.

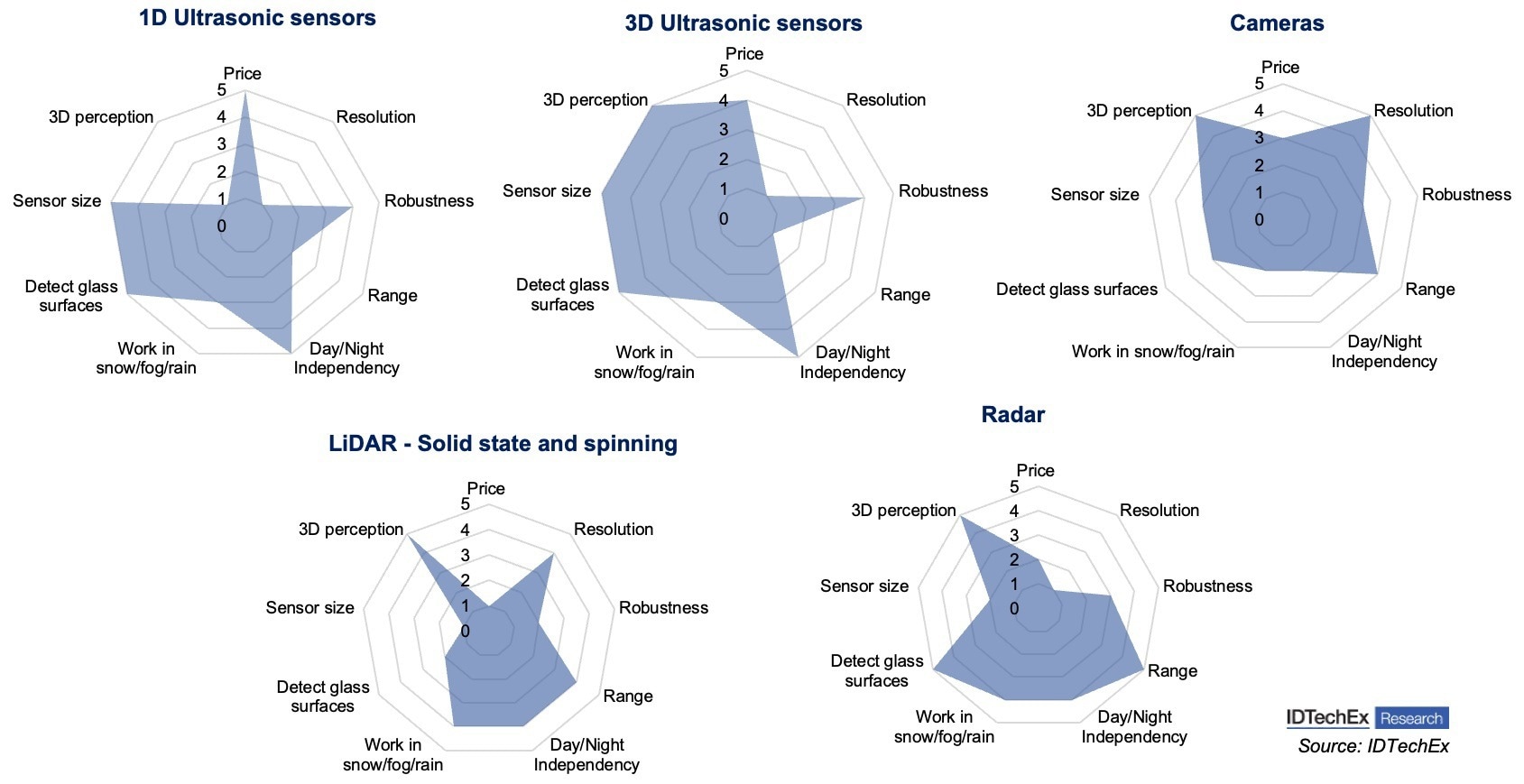

Comparison of several commonly used sensors in robots. Image Credit: IDTechEx

Human-robot interaction (HRI), precise control, and safe collaboration between humans and robots are the cornerstones of adopting automation. In robotics, safety spans multiple tasks, including collision detection, obstacle avoidance, navigation and localization, force detection, and proximity detection. All these tasks are enabled by a suite of sensors, including LiDAR, imaging/vision sensors (cameras), tactile sensors, and ultrasonic sensors. With the advancement of machine vision technology, cameras are becoming increasingly important in robots.

Working Principle of Sensors in Robotics – Vision Sensors/Cameras

CCD (charge-coupled device) and CMOS (complementary metal oxide semiconductor) are the two common types of vision sensors. A CMOS sensor is a digital device that converts the charge of each pixel to a corresponding voltage, and the sensor typically includes amplifiers, noise correction, and digitization circuits. By contrast, a CCD sensor is an analog device that contains an array of photosensitive sites. Although each has its strengths, as CMOS technology has matured, CMOS sensors have come to be widely considered an appropriate fit for machine vision in robots thanks to their smaller footprint, lower cost, and lower power consumption compared with CCD sensors.

Vision sensors can be used for motion and distance estimation, object identification, and localization. Their main benefit is that they collect significantly more information, at higher resolution, than other sensors such as LiDAR and ultrasonic sensors. The diagram above compares different sensors based on nine benchmarks. Vision sensors offer high resolution at low cost. However, they are inherently susceptible to adverse weather and poor lighting conditions; therefore, other sensors are often needed to increase overall system robustness when robots work in unpredictable weather or difficult terrain. A more detailed analysis and comparison of these benchmarks is included in IDTechEx’s latest report, “Sensors for Robotics 2023-2043: Technologies, Markets, and Forecasts”.

How Are the Vision Sensors Used for Safety in Mobile Robots?

Mobile robotics is one of the largest robotic application areas in which cameras are used for object classification, safety, and navigation. Mobile robots primarily refer to automated guided vehicles (AGVs) and autonomous mobile robots (AMRs). However, autonomous mobility also plays an important role in many other robots, ranging from food delivery robots to autonomous agricultural robots (e.g., mowers). Autonomous mobility is an inherently complicated task that requires obstacle avoidance and collision detection.

Depth estimation is one of the key steps in obstacle avoidance. The task takes one or more RGB images collected from vision sensors as input. Machine vision algorithms use these images to reconstruct a 3D point cloud, from which the distance between the obstacle and the robot is estimated.

As of 2023, the majority of mobile robots (e.g., AGVs, AMRs, food delivery robots, and robotic vacuums) are still used indoors, in places such as warehouses, factories, shopping malls, and restaurants, where the environment is well controlled, with stable illumination and a stable internet connection. Under these conditions, cameras can achieve their best performance, and machine vision tasks can be performed in the cloud, which significantly reduces the computational power required on the robot itself and therefore lowers its cost. For grid-based AGVs, for example, cameras only need to monitor the magnetic tape or QR codes on the floor.

Although this approach is widely used today, it does not work well for outdoor sidewalk robots or inspection robots that operate in areas with limited Wi-Fi coverage (e.g., under tree canopies). To address this problem, in-camera computer vision is emerging. As the name indicates, all image processing is completed within the camera.

Given the increasing demand for outdoor robots, IDTechEx believes that in-camera computer vision will be increasingly needed in the long term, especially for robots designed to work in difficult terrain and harsh environments (e.g., exploration robots). In the short term, however, IDTechEx believes that the high power consumption of onboard computer vision, along with the high cost of chips, will likely hold back adoption. IDTechEx believes that many robot original equipment manufacturers (OEMs) would prefer to incorporate other sensors (e.g., ultrasonic sensors and LiDAR) as a first step to enhance the safety and robustness of their products' environment perception.
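
To make the depth-estimation step described earlier in this section more concrete, here is a minimal Python sketch of one common way to go from images to a 3D point cloud. The article does not specify a method, so this is an assumption: it supposes a calibrated, rectified stereo camera pair for which a disparity map (in pixels) has already been computed, and it applies the standard pinhole relation Z = f·B/d. All function and parameter names (`disparity_to_depth`, `point_cloud`, `nearest_obstacle_m`, `half_width_m`, and so on) are hypothetical and chosen for illustration only.

```python
from typing import List, Optional, Sequence, Tuple

Point3D = Tuple[float, float, float]  # (x right, y down, z forward), in metres


def disparity_to_depth(disparity_px: float, focal_px: float,
                       baseline_m: float) -> Optional[float]:
    """Depth Z = f * B / d for a rectified stereo pair (assumed setup).

    Returns None when the disparity is not positive (no valid match).
    """
    if disparity_px <= 0:
        return None
    return focal_px * baseline_m / disparity_px


def back_project(u: float, v: float, depth_m: float, focal_px: float,
                 cx: float, cy: float) -> Point3D:
    """Map pixel (u, v) with known depth to a 3D point (pinhole model)."""
    x = (u - cx) * depth_m / focal_px
    y = (v - cy) * depth_m / focal_px
    return (x, y, depth_m)


def point_cloud(disparity_map: Sequence[Sequence[float]], focal_px: float,
                baseline_m: float, cx: float, cy: float) -> List[Point3D]:
    """Build a point cloud from a disparity map given as rows of pixels."""
    points: List[Point3D] = []
    for v, row in enumerate(disparity_map):
        for u, d in enumerate(row):
            z = disparity_to_depth(d, focal_px, baseline_m)
            if z is not None:  # skip pixels without a valid disparity
                points.append(back_project(u, v, z, focal_px, cx, cy))
    return points


def nearest_obstacle_m(points: Sequence[Point3D],
                       half_width_m: float) -> Optional[float]:
    """Smallest forward distance among points inside the robot's path.

    The path is approximated (an assumption) as a corridor of
    +/- half_width_m around the camera's optical axis.
    """
    in_path = [z for x, _, z in points if abs(x) <= half_width_m]
    return min(in_path) if in_path else None
```

A robot controller could compare the value returned by `nearest_obstacle_m` against a stopping distance to decide whether to slow down or stop. In practice the disparity map would come from a stereo-matching library or a learned depth model, and this per-pixel loop would be vectorized; the sketch only shows the geometry.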

A more detailed analysis of this trend and of how different sensors are used together is included in IDTechEx’s latest report, “Sensors for Robotics 2023-2043: Technologies, Markets, and Forecasts”.