Jun 28 2019

Losing a limb, whether through accident or illness, can pose physical and emotional hardships for an amputee and reduce their quality of life. Prosthetic limbs can be very beneficial, but most are expensive and cumbersome to use.

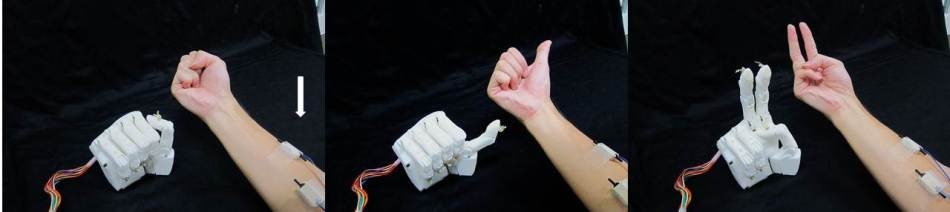

Different hand positions of the prosthetic hand. The prosthetic hand uses signals from electrodes (arrow) and machine learning to copy hand positions. (Image Credit: Hiroshima University Biological Systems Engineering Lab)

The Biological Systems Engineering Lab at Hiroshima University has designed a new 3D-printed prosthetic hand integrated with a computer interface. It is the lab's cheapest and lightest model to date, and it responds to the wearer's motion intent better than its predecessors. Earlier generations of the lab's prosthetic hands were built from metal, which is heavy and costly to manufacture.

Professor Toshio Tsuji of the Graduate School of Engineering, Hiroshima University, illustrates how the new hand and computer interface work using the game of “Rock, Paper, Scissors”. The wearer imagines a hand movement, like clenching the fist for Rock or making a peace sign for Scissors, and the computer attached to the hand combines the previously learned movements of all five fingers to perform this motion.

The patient just thinks about the motion of the hand and then the robot automatically moves. The robot is like a part of his body. You can control the robot as you want. We will combine the human body and machine like one living body.

Toshio Tsuji, Professor, Graduate School of Engineering, Hiroshima University

Electrodes in the socket of the prosthesis measure electrical signals from nerves through the skin, similar to how an ECG measures heart rate. The signals are sent to the computer, which takes just five milliseconds to decide which movement is intended. The computer then sends electrical signals to the motors in the hand.
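The signal path described above (electrode readings in, a movement decision out) can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the lab's implementation: the feature (mean absolute value per electrode channel), the nearest-template decision rule, the two-channel layout, and every name and number in `TEMPLATES` are hypothetical. The actual system uses a neural network for this step.

```python
from statistics import fmean

# Hypothetical per-channel amplitude templates for three motions.
# These values are invented for illustration only.
TEMPLATES = {
    "rest": [0.05, 0.05],
    "fist": [0.80, 0.60],
    "open": [0.20, 0.90],
}


def mean_absolute_value(window):
    """Mean absolute value of one channel's samples (a simple amplitude feature)."""
    return fmean(abs(sample) for sample in window)


def extract_features(channels):
    """Turn one window of samples per electrode channel into one feature per channel."""
    return [mean_absolute_value(window) for window in channels]


def nearest_motion(features, templates):
    """Return the motion label whose template is closest in squared Euclidean distance."""
    def distance(template):
        return sum((f - t) ** 2 for f, t in zip(features, template))

    return min(templates, key=lambda label: distance(templates[label]))


def decide(channels):
    """Stand-in for the decision step: electrode windows in, motion label out."""
    return nearest_motion(extract_features(channels), TEMPLATES)
```

Calling `decide` with one short window of samples per channel returns one of the hypothetical labels, which a controller could then translate into motor commands.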

The neural network, referred to as the Cybernetic Interface, allows the computer to “learn”. It was trained to identify movements of each of the five fingers and then combine them into different patterns to turn Scissors into Rock, grab a water bottle, or regulate the force used to shake a hand.
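The idea of building whole-hand motions from individual finger movements can be illustrated with a small sketch. It assumes, purely for illustration, that some upstream classifier yields one "flexed" probability per finger; the threshold, the finger order, and the pose table are hypothetical and do not come from the Cybernetic Interface itself.

```python
# Finger order assumed for this sketch.
FINGERS = ("thumb", "index", "middle", "ring", "little")

# Hypothetical pose table: 1 means the finger is flexed, 0 means extended.
POSES = {
    "rock": (1, 1, 1, 1, 1),
    "scissors": (1, 0, 0, 1, 1),
    "paper": (0, 0, 0, 0, 0),
}


def finger_states(flex_probabilities, threshold=0.5):
    """Convert per-finger flex probabilities into a tuple of 0/1 states."""
    if len(flex_probabilities) != len(FINGERS):
        raise ValueError("expected one probability per finger")
    return tuple(1 if p >= threshold else 0 for p in flex_probabilities)


def match_pose(states):
    """Return the name of the pose matching the finger states, or None if unknown."""
    for name, pattern in POSES.items():
        if pattern == states:
            return name
    return None
```

For example, `match_pose(finger_states([0.9, 0.1, 0.2, 0.8, 0.7]))` yields `"scissors"` under these assumptions, because only the index and middle fingers fall below the flex threshold. A combination that is not in the table returns `None`, which is where a learned model would generalize and a lookup table cannot.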

“This is one of the distinctive features of this project. The machine can learn simple basic motions and then combine and then produce complicated motions,” Tsuji says.

The hand was tested by the Hiroshima University Biological Systems Engineering Lab on patients at the Robot Rehabilitation Center in the Hyogo Institute of Assistive Technology, Kobe. The scientists also partnered with the company Kinki Gishi to design the socket that houses the amputee's arm.

Seven participants were chosen for this research, including one amputee who had worn a prosthesis for 17 years. The participants were asked to perform different tasks with the hand that simulated everyday life, such as picking up small objects or making a fist. The accuracy of the prosthetic hand's movements measured in the research was over 95% for single simple motions and 93% for complicated, untaught motions.

However, this hand is not yet ready for all wearers. Using the hand for a prolonged period can be tough for the wearer, who must concentrate on the hand position in order to sustain it, which results in muscle fatigue. The team plans to develop a training schedule to help wearers make the best use of the hand, and anticipates that it will become an inexpensive alternative on the prosthetics market.